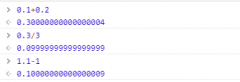

Problem description

I am trying to get the h=Af?????????? value from the reporting link on a social networking site. I tested with this: http://jsfiddle.net/rtnNd/6/ to retrieve the value, and it works when I test it.

But I don't want the user to have to interact with it; the script has to retrieve the page automatically and get the value from it. (I am testing in the Google Chrome JavaScript console.) When I try the following code, it does scrape the page, assign it to the variable d, and find the value of h, but the search/replace doesn't work at all. Why is getElementsByClassName not working here?

With jQuery:

```javascript
function getParameterByName(name, path) {
    var match = RegExp('[?&]' + name + '=([^&]*)').exec(path);
    return match && decodeURIComponent(match[1].replace(/\+/g, ' '));
}

var URL = 'http://www.facebook.com/zuck';
xmlhttp = new XMLHttpRequest();
xmlhttp.open("GET", URL, true);
xmlhttp.setRequestHeader("Content-type", "application/xhtml+xml");
xmlhttp.send();
xmlhttp.onreadystatechange = function () {
    if (xmlhttp.status == 404) {
        result = error404;
    }
    if (xmlhttp.readyState == 4 && xmlhttp.status == 200) {
        var d = xmlhttp.responseText;
        var jayQuery = document.createElement('script');
        jayQuery.src = "//ajax.googleapis.com/ajax/libs/jquery/1.3.2/jquery.min.js";
        document.getElementsByTagName("head")[0].appendChild(jayQuery);
        var href = $($('.hidden_elem').d.replace('<!--', '').replace('-->', '')).find('.itemAnchor[href*="h="]').attr('href');
        var fbhvalue = getParameterByName('h', href);
        alert(fbhvalue);
    }
}
```
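In the code above, the jQuery `<script>` tag is appended and `$` is used on the very next line. A dynamically inserted external script is downloaded and run asynchronously, so jQuery is not guaranteed to exist at that point; code that needs it should wait for the element's `load` event. The sketch below is one way to do that with a Promise. `loadScript` is a hypothetical helper, and it takes the document as a parameter (pass `document` in the browser) so it can also be exercised with a stub.

```javascript
// Hypothetical helper: inject a <script> tag and resolve once it has loaded.
// `doc` is the document to inject into (window.document in a browser).
function loadScript(doc, src) {
  return new Promise((resolve, reject) => {
    const el = doc.createElement('script');
    el.src = src;
    el.onload = () => resolve(el);                                  // file downloaded and executed
    el.onerror = () => reject(new Error('Failed to load ' + src));  // network or 404 error
    doc.head.appendChild(el);                                       // loading starts here, asynchronously
  });
}

// Usage in the browser (sketch): only touch jQuery after the promise resolves.
// loadScript(document, '//ajax.googleapis.com/ajax/libs/jquery/1.3.2/jquery.min.js')
//   .then(() => { /* jQuery is defined here */ })
//   .catch((err) => console.error(err));
```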

I get this error: "Uncaught TypeError: Cannot call method 'replace' of undefined", even though I added jQuery.
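One likely reading of this error (an interpretation of the code, not a confirmed diagnosis): `$('.hidden_elem').d` does not refer to the local variable `d`. It looks up a property named `d` on whatever `$('.hidden_elem')` returns, that property does not exist, so the expression is `undefined`, and calling `.replace` on it throws a `TypeError`. The sketch below reproduces this in plain JavaScript; `collection` is a hypothetical stand-in for the result of `$('.hidden_elem')`, and the markup in `d` is made up.

```javascript
// Hypothetical stand-in for the object returned by $('.hidden_elem').
const collection = { length: 1, 0: { className: 'hidden_elem' } };

// Made-up stand-in for xmlhttp.responseText: the local variable d holds a string.
const d = '<div class="hidden_elem"><!-- <a class="itemAnchor" href="#">x</a> --></div>';

// collection.d reads a property named "d" on the collection. It is unrelated to
// the variable d, so it evaluates to undefined and .replace() throws.
function replaceOnProperty() {
  try {
    collection.d.replace('<!--', '');
    return 'no error';
  } catch (err) {
    return err instanceof TypeError ? 'TypeError' : 'other error';
  }
}

// Calling .replace() on the variable itself works, because d is a string.
const stripped = d.replace('<!--', '').replace('-->', '');

console.log(typeof collection.d); // prints: undefined
console.log(replaceOnProperty()); // prints: TypeError
```

The update further down reads the markup through `$('.hidden_elem')[1].innerHTML` instead, which does yield a string.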

Without jQuery:

```javascript
function getParameterByName(name, path) {
    var match = RegExp('[?&]' + name + '=([^&]*)').exec(path);
    return match && decodeURIComponent(match[1].replace(/\+/g, ' '));
}

var URL = 'http://www.facebook.com/zuck';
xmlhttp = new XMLHttpRequest();
xmlhttp.open("GET", URL, true);
xmlhttp.setRequestHeader("Content-type", "application/xhtml+xml");
xmlhttp.send();
xmlhttp.onreadystatechange = function () {
    if (xmlhttp.status == 404) {
        // result = error404;
    }
    if (xmlhttp.readyState == 4 && xmlhttp.status == 200) {
        d = xmlhttp.responseText;
        checker(d);
    }
}

function checker(i) {
    i.value = i;
    var hiddenElHtml = i.replace('<!--', '').replace('-->', '').find('.itemAnchor[href*="h="]').attr('href');
    var divObj = document.createElement('div');
    divObj.innerHTML = hiddenElHtml;
    var itemAnchor = divObj.getElementsByClassName('itemAnchor')[0];
    var href = itemAnchor.getAttribute('href');
    var fbId = getParameterByName('h', href);
    alert(fbId);
}
```

When I run it, I get the error "object has no method 'find'".
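This second error has a similar root: `xmlhttp.responseText` is a plain string, and `find()` and `attr()` are jQuery methods, not `String` methods, so `i.replace(...).replace(...).find(...)` fails as soon as `find` is looked up on the string returned by `replace`. A minimal check in plain JavaScript (the sample markup is made up):

```javascript
// Made-up stand-in for xmlhttp.responseText.
const responseText = '<!-- <a class="itemAnchor" href="/report?h=AfExample">Report</a> -->';

// replace() is a String method, so this part works and returns another string.
const uncommented = responseText.replace('<!--', '').replace('-->', '');

console.log(typeof uncommented);      // prints: string
console.log(typeof uncommented.find); // prints: undefined (find() belongs to jQuery, not String)
console.log(typeof uncommented.attr); // prints: undefined

// Calling a method that does not exist on the value throws a TypeError.
function callFind(str) {
  try {
    str.find('.itemAnchor');
    return 'ok';
  } catch (err) {
    return err instanceof TypeError ? 'TypeError' : 'other error';
  }
}
console.log(callFind(uncommented));   // prints: TypeError
```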

Why are getElementsByClassName, getParameterByName, jQuery, and getAttribute not working once the xmlhttp.onreadystatechange handler runs? Could you please point me in the right direction? Is there any way to get the value? Thanks for reading my question and answering!

Update: If I visit the profile page and run the following script in Google Chrome's JavaScript console, it gets the h value. But I really don't want it that way: I want to specify the user's profile name and have the script retrieve the page automatically and get its h value.

```javascript
var jayQuery = document.createElement('script');
jayQuery.src = "//ajax.googleapis.com/ajax/libs/jquery/1.3.2/jquery.min.js";
document.getElementsByTagName("head")[0].appendChild(jayQuery);

function getParameterByName(name, path) {
    var match = RegExp('[?&]' + name + '=([^&]*)').exec(path);
    return match && decodeURIComponent(match[1].replace(/\+/g, ' '));
}

var href = $($('.hidden_elem')[1].innerHTML.replace('<!--', '').replace('-->', '')).find('.itemAnchor[href*="h="]').attr('href');
alert(href);
var fbId = getParameterByName('h', href);
alert(fbId);
```
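Judging by this script, the link markup on the profile page sits inside an HTML comment within a `.hidden_elem` element, and stripping the `<!--` / `-->` markers turns it back into markup jQuery can parse. One detail worth knowing: `String.prototype.replace` with a string pattern replaces only the first occurrence, so if the element ever held more than one comment, only the first would be unwrapped. A global regular expression removes every marker; `uncomment` below is a hypothetical helper showing the difference.

```javascript
// Hypothetical helper: remove every HTML comment marker, not just the first pair.
function uncomment(html) {
  return html.replace(/<!--|-->/g, '');
}

const twoComments = '<!-- a --><!-- b -->';

// String patterns: only the first "<!--" and the first "-->" are removed.
const firstOnly = twoComments.replace('<!--', '').replace('-->', '');

console.log(JSON.stringify(firstOnly));              // " a <!-- b -->"
console.log(JSON.stringify(uncomment(twoComments))); // " a  b "
```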

Recommended answer

If you use this script outside Facebook, you will definitely run into the same-origin policy (SOP), which restricts such requests for security reasons. See this: Access Control Allow Origin not allowed by
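For reference, the same-origin policy compares origins, meaning the scheme, host and port of the page and of the requested URL. A script running on jsfiddle.net that requests http://www.facebook.com/zuck is making a cross-origin request, so the browser will not expose the response to the script unless the server opts in with CORS headers such as `Access-Control-Allow-Origin`. The browser performs this check itself; `isSameOrigin` below is a hypothetical helper that only illustrates the comparison, using the standard `URL` API.

```javascript
// Hypothetical helper: two URLs are same-origin when scheme, host and port all match.
// Relative request URLs are resolved against the page URL first.
function isSameOrigin(pageUrl, requestUrl) {
  const page = new URL(pageUrl);
  const target = new URL(requestUrl, page.href);
  return page.origin === target.origin;
}

console.log(isSameOrigin('http://jsfiddle.net/rtnNd/6/', 'http://www.facebook.com/zuck')); // false
console.log(isSameOrigin('http://www.facebook.com/someone', '/zuck'));                      // true
console.log(isSameOrigin('http://www.facebook.com/', 'https://www.facebook.com/zuck'));     // false (scheme differs)
```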

So, let's assume you do get the response string, xmlhttp.responseText. You cannot use jQuery or JavaScript functions that only work on DOM objects, because it is just a plain string. For example, you used find() and attr(), which don't work on strings. You can work with it as a string and nothing more.
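Following the answer's advice to treat the response as a string, one possible approach is to search the raw text for the link and then pass its `href` to the question's `getParameterByName`. This is a sketch under several assumptions: the sample markup is made up, attributes are assumed to be double-quoted, and only the `&amp;` entity is decoded. Regular expressions are a fragile way to read HTML, so this will break if the markup changes, and it only helps once the response can actually be read, which the same-origin policy prevents from another site. `findItemAnchorHref` is a hypothetical helper.

```javascript
// The question's helper, with the "+" escaped so the regular expression is valid.
function getParameterByName(name, path) {
  const match = new RegExp('[?&]' + name + '=([^&]*)').exec(path);
  return match && decodeURIComponent(match[1].replace(/\+/g, ' '));
}

// Hypothetical helper: scan every <a ...> tag in a raw HTML string and return the
// href of the first one whose class list contains "itemAnchor" and whose href has
// an "h" parameter. Assumes double-quoted attributes; decodes only "&amp;".
function findItemAnchorHref(html) {
  const tagRe = /<a\b[^>]*>/gi;
  let tag;
  while ((tag = tagRe.exec(html)) !== null) {
    const cls = /\sclass="([^"]*)"/i.exec(tag[0]);
    const href = /\shref="([^"]*)"/i.exec(tag[0]);
    if (!cls || !href) continue;
    const isItemAnchor = cls[1].split(/\s+/).includes('itemAnchor');
    const url = href[1].replace(/&amp;/g, '&');
    if (isItemAnchor && /[?&]h=/.test(url)) return url;
  }
  return null;
}

// Made-up sample of what responseText might contain. The link sits inside a
// comment, which does not matter for a plain string search.
const sample =
  '<div class="hidden_elem"><code><!-- <ul><li>' +
  '<a class="itemAnchor" href="/report?id=4&amp;h=AfExampleValue">Report</a>' +
  '</li></ul> --></code></div>';

const href = findItemAnchorHref(sample);
console.log(href);                          // prints: /report?id=4&h=AfExampleValue
console.log(getParameterByName('h', href)); // prints: AfExampleValue
```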
